Ask any team that has shipped an AI feature in the last two years and they will tell you it worked. Faster ticket handling. Better draft quality. Summaries that actually save time. The metrics moved. The demo looked good. Someone in leadership called it a win.

Now ask them whether those wins are compounding into something bigger. Whether the ten inference points scattered across the business are coordinating, or just coexisting. Whether anyone could tell you, today, which prompt version influenced a customer decision last Tuesday.

That is usually where the conversation gets quiet.

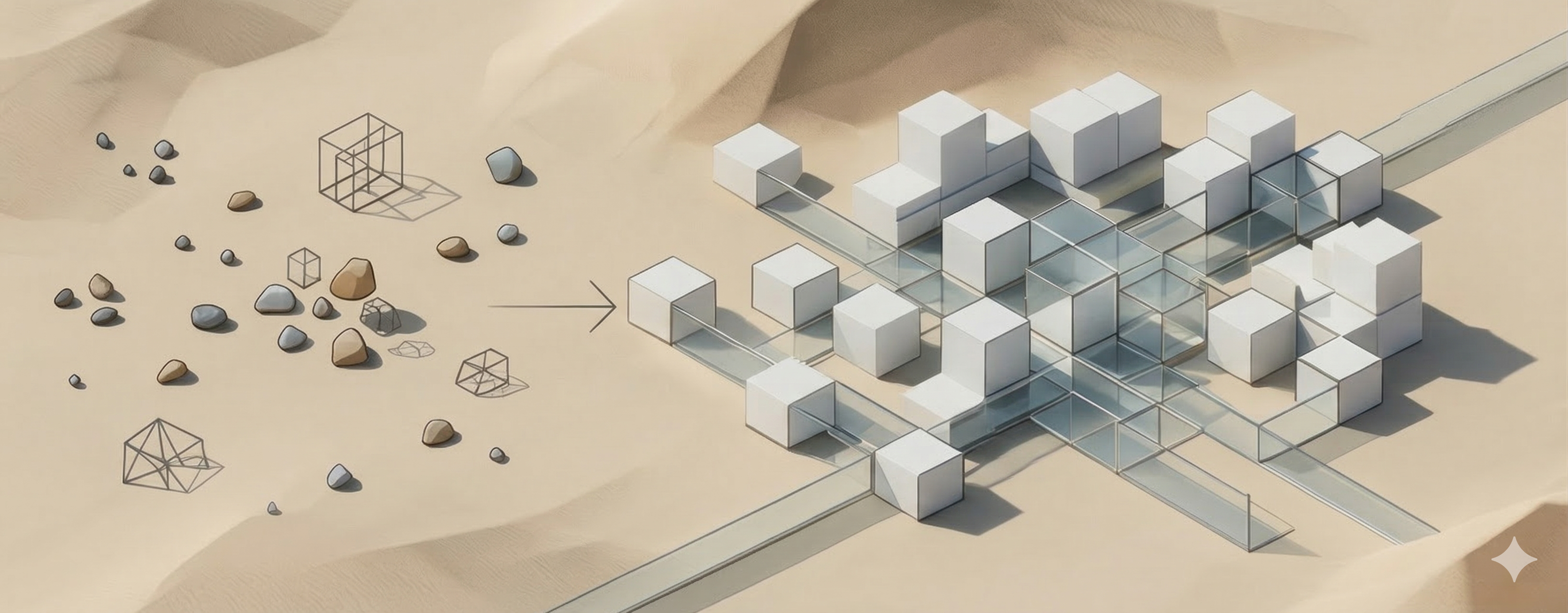

The problem is rarely the AI. It is that most organizations are building isolated inference points and calling it an AI strategy. Individual wins, no shared architecture. And the longer that continues, the more expensive the eventual reckoning becomes.

What "Atomic Inference" Actually Means in Practice

I use the term atomic inference point to describe what most enterprise AI deployments actually are: a single, self-contained LLM call, bounded by one context window, serving one function, owned by one team. Support uses one. Sales uses another. Marketing has its own. Each is optimized for its local task and largely invisible to everything else running alongside it.

Individually, there is nothing wrong with that. The issue is what happens when you have fifteen of them running in parallel with no shared logic above them. You do not get a more intelligent organization. You get the same fragmentation you had before, now automated and running faster.

The Point Where Proliferation Becomes a Problem

In most organizations, the first signs of real trouble appear six to eighteen months after the initial rollout wave. By then, the inference point count has grown faster than anyone explicitly decided it should. Each addition made sense at the time. Together, they have created something nobody designed.

A rough picture of what that looks like:

- A support assistant using a conversational embedding model to surface knowledge base articles

- A sales copilot summarizing CRM account history with a structured summarization model

- A marketing tool clustering content by topic using its own embedding layer

- An ops assistant scoring escalation urgency through a fine-tuned classifier

Each works reasonably well inside its own domain. None were designed to work with each other. There is no shared inference control plane, no aligned vector space, no coordinated latency budget. The organization has not built an AI capability - it has built four separate ones that happen to share a company name.
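One way to make that gap concrete is a small audit over an inventory of inference points. This is a minimal sketch that assumes a hypothetical in-house registry recording each point's owning team, embedding model, and agreed latency budget. `InferencePoint`, `audit`, and the model names are illustrative, not any real product's API.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class InferencePoint:
    name: str
    team: str
    embedding_model: Optional[str]    # None if the point does no retrieval or clustering
    latency_budget_ms: Optional[int]  # None if no budget was ever agreed

def audit(points: list[InferencePoint]) -> list[str]:
    """Return human-readable findings for two of the coordination gaps above."""
    findings = []
    # Embeddings from different models live in different vector spaces,
    # so similarity scores cannot be compared across them.
    models = sorted({p.embedding_model for p in points if p.embedding_model})
    if len(models) > 1:
        findings.append(f"{len(models)} unaligned vector spaces: {', '.join(models)}")
    # A point with no agreed budget cannot take part in an end-to-end latency budget.
    for p in points:
        if p.latency_budget_ms is None:
            findings.append(f"{p.name} ({p.team}) has no latency budget")
    return findings

points = [
    InferencePoint("kb-search", "support", "embed-conversational-v2", 800),
    InferencePoint("account-summary", "sales", None, None),
    InferencePoint("topic-clusters", "marketing", "embed-marketing-v1", None),
    InferencePoint("urgency-score", "ops", None, 300),
]
for finding in audit(points):
    print(finding)
```

Nothing in this audit is sophisticated. The point is that it can only run once someone has decided the inventory should exist at all.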

At typical load, measured at p50 (the median response time), this is mostly invisible. Push the system harder, chain a few inference calls together inside a single customer journey, and the debt surfaces immediately. Latency spikes. Context gets truncated. Steps that require reasoning get skipped because there is no token budget left to reason. The quality that held up in every controlled evaluation starts degrading in production, at exactly the moments that matter most.
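The token side of that failure is simple arithmetic. This sketch assumes that each step in a chained journey re-sends a fixed system prompt plus everything earlier steps added to the shared context, and that each step reserves a fixed number of tokens for its own answer. The function name and all numbers are hypothetical.

```python
def reasoning_headroom(context_window: int, system_prompt: int,
                       added_per_step: list[int], answer_reserve: int) -> list[int]:
    """Tokens left for reasoning at each step of a chained journey.

    added_per_step[i] is what step i contributes to the shared context
    (its output plus any retrieved documents) for the steps after it.
    """
    headroom = []
    carried = 0  # context accumulated from earlier steps
    for added in added_per_step:
        left = context_window - system_prompt - carried - answer_reserve
        headroom.append(max(left, 0))  # at 0 or below, something must be truncated
        carried += added
    return headroom

# Hypothetical four-step journey on an 8,000-token context window.
print(reasoning_headroom(8_000, 2_000, [1_500, 2_500, 2_000, 1_500], 1_000))
# [5000, 3500, 1000, 0]
```

Each step looks healthy when a single team evaluates it alone. The fourth step runs out of room only because of what the first three carried in.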

Dynatrace's Global CIO Report 2025 found that nearly half of AI initiatives stall before moving beyond proof of concept, with integration complexity and governance uncertainty leading the list of reasons. Not model quality. Not budget. The systems built around the models.

Design Failure One: Business Rules Encoded in Prompts

Here is something that happens in nearly every organization moving fast with AI: someone realizes they can encode a business rule in a prompt. An eligibility condition becomes a sentence. A tone requirement becomes a paragraph. A compliance constraint becomes a bullet point. The model follows the instruction. The sprint closes. Nobody files it anywhere formal.

Multiply that by twelve teams over eighteen months and you have a governance problem that looks technical but is actually organizational. Prompts are infrastructure - invisible infrastructure, which is exactly why they get treated like scratch notes instead of policy documents.

They are almost never version-controlled with the same care applied to code. They are rarely regression-tested when an underlying model gets updated. They are almost never reviewed across teams to check for contradictions. And because they live in natural language, two prompts can produce materially different outcomes while looking nearly identical to anyone reading them quickly.
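The following is a minimal sketch of treating prompts like versioned code, using only the Python standard library and assuming prompts are stored as plain strings. `prompt_version`, `regression_failures`, and `fake_model` are hypothetical names. `fake_model` is a deterministic stand-in for a real LLM call, and exact-match comparison is a simplification, since real model outputs usually need normalized or graded comparison.

```python
import difflib
import hashlib

def prompt_version(text: str) -> str:
    """Content-addressed version id: any change to the wording yields a new id."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]

def similarity(a: str, b: str) -> float:
    """Character-level similarity in [0, 1]."""
    return difflib.SequenceMatcher(None, a, b).ratio()

def regression_failures(prompt, model_fn, golden_cases):
    """Re-run golden cases and return the inputs whose output changed."""
    return [case for case, expected in golden_cases
            if model_fn(prompt, case) != expected]

def fake_model(prompt: str, case: dict) -> str:
    # Stand-in for an LLM: applies whichever tenure threshold the prompt states.
    threshold = 24 if "24 months" in prompt else 12
    return "credit" if case["tenure_months"] >= threshold else "no_credit"

v1 = "Offer a goodwill credit if the customer has been active for 12 months."
v2 = "Offer a goodwill credit if the customer has been active for 24 months."
# Golden cases recorded while v1 was live.
golden = [({"tenure_months": 18}, "credit"), ({"tenure_months": 6}, "no_credit")]

print(prompt_version(v1) != prompt_version(v2))     # True: distinct versions
print(round(similarity(v1, v2), 2))                 # near 1.0 despite a different rule
print(regression_failures(v2, fake_model, golden))  # [{'tenure_months': 18}]
```

A two-character edit leaves the prompts almost identical to a reader, yet it changes who gets a credit. The hash and the golden cases catch this even when a quick review does not.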

The consequences show up at the decision layer. A support assistant prompted to prioritize retention recommends a goodwill credit. A pricing assistant prompted to protect margin flags the same request as ineligible. The conflict lands in a human agent's queue. They resolve it manually. Over time, the organization learns to treat AI as a suggestion engine rather than a decision layer - and that reputation, once formed, is hard to reverse.

A Better Approach: Centralized Prompt Governance

The architecture that works here is not complicated in principle. Maintain a central library of policy fragments, SOP excerpts, and tone standards. Run an extraction layer on a regular cadence that pulls the relevant portions for each function and injects them into local prompts. Teams still own their inference points - they just stop inventing the rules from scratch every time.
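Here is a minimal sketch of the injection step, assuming the central library is a list of tagged, versioned fragments. `PolicyFragment`, `build_prompt`, and the fragment ids and texts are hypothetical. Stamping each injected fragment with its id and version is one way to make it answerable, later, which policy text shaped a given decision.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PolicyFragment:
    id: str
    version: int
    functions: frozenset[str]  # business functions this fragment applies to
    text: str

LIBRARY = [
    PolicyFragment("CREDIT-02", 5, frozenset({"support", "pricing"}),
                   "Goodwill credits require 12 months of active tenure."),
    PolicyFragment("TONE-01", 3, frozenset({"support", "sales"}),
                   "Use plain, non-technical language."),
    PolicyFragment("MKT-07", 1, frozenset({"marketing"}),
                   "Do not reference competitor pricing."),
]

def build_prompt(function: str, task: str, library: list[PolicyFragment]) -> str:
    """Prepend every fragment that applies to `function`, tagged with id and version."""
    relevant = sorted((f for f in library if function in f.functions), key=lambda f: f.id)
    header = "\n".join(f"[{f.id} v{f.version}] {f.text}" for f in relevant)
    return f"{header}\n\n{task}" if header else task

# The support team still writes its own task; the rules come from the library.
print(build_prompt("support", "Answer the customer's billing question.", LIBRARY))
```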

Condensing legal or compliance language is not a safe operation to hand entirely to an AI extraction layer. The exact phrasing of a liability clause is often the protection itself - paraphrasing it introduces risk even when the summary reads as accurate. Any extraction layer needs a validation step, and its output should be treated as a working reference, not an authoritative source. The central library is the source of truth. The extracted fragment is a convenience. When something goes wrong downstream, that distinction is what allows you to trace and correct it.
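A minimal sketch of such a validation step follows. It assumes the central library marks legal and compliance fragments as requiring verbatim text. The check only confirms that an extracted fragment is an exact excerpt of the source, ignoring whitespace. It cannot tell whether a permitted paraphrase is accurate, which still needs human review. `validate_extraction` and the sample clause are hypothetical.

```python
import re

def normalize(text: str) -> str:
    # Collapse whitespace only; wording and punctuation must match exactly.
    return re.sub(r"\s+", " ", text).strip()

def validate_extraction(source: str, extracted: str,
                        verbatim_required: bool) -> tuple[bool, str]:
    """Accept a fragment only if it is safe to inject under its library flag."""
    if not verbatim_required:
        return True, "paraphrase allowed; treat as a working reference"
    if normalize(extracted) in normalize(source):
        return True, "verbatim excerpt of the source"
    return False, "rejected: legal fragment is not a verbatim excerpt of the source"

SOURCE = ("The Company shall not be liable for indirect or consequential losses,\n"
          "except where caused by gross negligence.")
excerpt = ("shall not be liable for indirect or consequential losses, "
           "except where caused by gross negligence.")
paraphrase = "The Company is not liable for indirect losses unless grossly negligent."

print(validate_extraction(SOURCE, excerpt, verbatim_required=True))
print(validate_extraction(SOURCE, paraphrase, verbatim_required=True))
```

The paraphrase reads as accurate but drops "consequential", which is exactly the kind of loss this check exists to stop.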

Design Failure Two: Nobody Watching for Contradictions

Even with better prompt governance, inference points encoding different business objectives will occasionally produce conflicting outputs. That is not a failure of AI - it reflects the fact that retention goals and margin goals are sometimes genuinely in tension. The question is whether anyone catches those conflicts before a customer does.

In most organizations right now, the answer is no. Each team watches their own inference point. Nobody watches the space between them.

A Consistency Supervisor architecture addresses this directly: a dedicated evaluation layer that samples cross-functional outputs, checks for semantic contradictions, and flags divergences for human review. Not a replacement for good prompt governance, but an early warning system for the gaps it leaves.
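The next sketch shows the sampling-and-flagging loop, assuming each inference point emits a structured decision record rather than free text. The contradiction check here is a hand-written table of incompatible actions, a deliberately simple stand-in for the semantic (possibly model-based) comparison described above. `Decision`, `INCOMPATIBLE`, and `find_contradictions` are hypothetical.

```python
import random
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Decision:
    customer_id: str
    source: str  # inference point that produced the decision
    action: str  # normalized action label

# Hypothetical pairs of actions that cannot both stand for the same customer.
INCOMPATIBLE = {frozenset({"offer_credit", "deny_credit"})}

def find_contradictions(decisions: list[Decision], sample_rate: float,
                        rng: random.Random) -> list[tuple[str, str, str]]:
    """Sample customers at `sample_rate`; flag incompatible decision pairs for human review."""
    by_customer: dict[str, list[Decision]] = defaultdict(list)
    for d in decisions:
        by_customer[d.customer_id].append(d)
    flags = []
    for customer_id, ds in by_customer.items():
        if rng.random() >= sample_rate:
            continue  # customer not sampled this cycle
        for i, a in enumerate(ds):
            for b in ds[i + 1:]:
                if frozenset({a.action, b.action}) in INCOMPATIBLE:
                    flags.append((customer_id, a.source, b.source))
    return flags

decisions = [
    Decision("c1", "support", "offer_credit"),
    Decision("c1", "pricing", "deny_credit"),
    Decision("c2", "support", "offer_credit"),
]
print(find_contradictions(decisions, sample_rate=1.0, rng=random.Random(0)))
```

Sampling by customer rather than by individual output is deliberate. A contradiction exists only between two outputs. If each output were sampled independently at rate s, both sides of a given conflict would be seen together only with probability s squared.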

The tempting version is synchronous - route every output through a consistency check before it reaches the customer. The problem is that this adds a second probabilistic layer to every transaction, creates new latency at exactly the point where you can least afford it, and introduces its own failure mode: a supervisor that hallucinates a contradiction can stall workflows that were functioning correctly. At current model maturity, synchronous supervision at scale is overengineering the wrong problem.

Sampled, event-triggered evaluation is more practical and more honest about what the technology can reliably do today. It will not catch every conflict. It will catch enough of them early enough to matter - if, and this is the part most teams skip, the sampling rate is actually calibrated to the risk profile of what is being monitored.
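One way to calibrate the rate rests on three simplifying assumptions: a conflict hits a fixed fraction p of interactions, each interaction is sampled independently at rate s, and the supervisor flags every conflict it samples. Under those assumptions, the probability of sampling at least one conflicting interaction among n is 1 - (1 - p·s)^n, which can be solved for s. `required_sample_rate` is a hypothetical helper, and the numbers are illustrative.

```python
def required_sample_rate(conflict_rate: float, interactions: int, target: float) -> float:
    """Smallest sampling rate s with P(at least one conflict sampled) >= target.

    Solves 1 - (1 - conflict_rate * s) ** interactions = target for s,
    assuming independence across interactions; capped at 1.0 (sample everything).
    """
    per_interaction = 1 - (1 - target) ** (1 / interactions)
    return min(per_interaction / conflict_rate, 1.0)

# A conflict touching 1 in 1,000 interactions, at 500 interactions per hour,
# with a 95% chance of catching it inside the review window:
print(required_sample_rate(0.001, 500, 0.95))     # 1-hour window: 1.0 (sample everything)
print(required_sample_rate(0.001, 12_000, 0.95))  # 24-hour window: roughly 0.25
```

The same conflict needs full coverage to be caught within an hour, but only about a quarter of traffic to be caught within a day. Which window is acceptable depends on how much damage the conflict does while it runs.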

"We review outputs regularly" is not a governance posture. It is a placeholder for one. The gap between a sampled batch review and a live conflict executing in production is not abstract. A pricing contradiction running at 500 customer interactions per hour, with a 24-hour batch review cycle, means up to 12,000 affected transactions before the first flag is raised. Whether that is acceptable depends entirely on the blast radius of the specific failure - which is exactly the analysis most teams have not done before accepting the asynchronous model.

Design Failure Three: Confusing Accountability Silos with Labor Silos

The standard argument against consolidating AI across functions is that departments exist for compliance reasons. Marketing, legal, and support operate under different mandates. Merging them into a generalized agent creates uncontrolled liability exposure. This argument gets made a lot, and it is not wrong.

It is also being used to defend something it does not actually defend.

There are two very different reasons an organization might run separate teams: because the accountability structures are legally or regulatorily distinct, or because the work historically required separate pools of human labor. The first reason is real and worth preserving in any AI architecture. The second is largely a constraint of pre-AI operating models.

AI replaces execution, not accountability. A well-designed system can carry out the work of a marketing writer and a customer service agent without treating a marketing commitment and a service commitment as equivalent. The distinction between those is encoded at the prompt governance and decision precedence layer - it does not require separate org chart boxes to remain enforceable.
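As a minimal sketch of what encoding the distinction can mean, one shared executor drafts commitments, each tagged with the accountability domain it falls under, and each domain's own rules decide whether the commitment stands. The domains, rules, and thresholds are hypothetical placeholders for real mandates.

```python
from dataclasses import dataclass
from enum import Enum

class Domain(Enum):
    MARKETING = "marketing"
    SERVICE = "service"

@dataclass(frozen=True)
class Commitment:
    domain: Domain  # whose mandate this commitment falls under
    text: str
    amount: float = 0.0

def marketing_ok(c: Commitment) -> bool:
    # Placeholder rule: marketing copy may not promise guaranteed outcomes.
    return "guarantee" not in c.text.lower()

def service_ok(c: Commitment) -> bool:
    # Placeholder rule: refunds above 50 need separate approval.
    return c.amount <= 50.0

MANDATES = {Domain.MARKETING: marketing_ok, Domain.SERVICE: service_ok}

def violations(commitments: list[Commitment]) -> list[Commitment]:
    """Check each commitment against its own domain's rules, not a shared one."""
    return [c for c in commitments if not MANDATES[c.domain](c)]

# All three drafted by the same executor; accountability still differs per item.
drafts = [
    Commitment(Domain.MARKETING, "Guaranteed savings of 30% in year one"),
    Commitment(Domain.SERVICE, "Refund of 20 for the delayed order", 20.0),
    Commitment(Domain.SERVICE, "Refund of 120 for the delayed order", 120.0),
]
for c in violations(drafts):
    print(c.domain.value, "->", c.text)
```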

The question most organizations are avoiding is: if AI can handle the execution work that previously required functional separation, what does the right accountability structure actually look like? That question touches headcount, job architecture, and cost structure, which is why it tends to get deferred. But deferring it does not resolve the architecture. It just means the decision gets made by default, usually in a way that recreates the fragmentation problem one layer up.

The technical challenge of re-encoding accountability is manageable. The organizational challenge is not. The real risk is not that an AI system cannot distinguish between a retention commitment and a pricing commitment. It is that the humans redesigning the system will not have agreed on who owns the decision when something goes wrong. "The AI handles it" is not an accountability structure. It is a gap waiting to become a problem. Every inference point touching a consequential decision needs a named human owner before it goes live - not as a formality, but as a design requirement.
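Making the named owner a design requirement can be as blunt as a deployment gate. This minimal sketch assumes each inference point is registered with a flag for whether it touches a consequential decision. `Deployment`, `go_live_blockers`, and the example names are hypothetical. The check only confirms that an owner is recorded, not that the person has agreed to the role.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Deployment:
    name: str
    consequential: bool   # e.g. touches money, eligibility, or contractual commitments
    owner: Optional[str]  # should be a named person; this sketch only checks it is set

def go_live_blockers(deployments: list[Deployment]) -> list[str]:
    """List every consequential inference point that would go live without an owner."""
    return [f"{d.name}: consequential decision with no named owner"
            for d in deployments
            if d.consequential and not (d.owner and d.owner.strip())]

pending = [
    Deployment("refund-approver", consequential=True, owner=None),
    Deployment("faq-drafts", consequential=False, owner=None),
    Deployment("pricing-exceptions", consequential=True, owner="A. Example"),
]
blockers = go_live_blockers(pending)
for b in blockers:
    print(b)
```

Wired into a release pipeline, a non-empty `blockers` list would fail the build rather than merely produce a warning.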

Why These Problems Stay Hidden Until It Is Expensive to Fix Them

None of the three failures above announce themselves clearly. That is what makes them dangerous.

Each atomic inference point produces real value in isolation. The teams building them are doing good work. The metrics validate the investment. And because every individual component is performing, the aggregate picture - fragmented prompts, no cross-functional oversight, accountability gaps at decision handoffs - does not surface in any single team's reporting.

LLM-based systems also fail differently from deterministic ones. A broken rule engine throws an error. A degraded LLM workflow produces outputs that are subtly, incrementally worse. The failure accumulates quietly across thousands of interactions before it becomes visible at an aggregate level. By the time someone notices, the inference point count is high enough that untangling the architecture is no longer an engineering project. It is an organizational change program.
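The sketch below shows one way to catch that slow slide. It assumes every sampled interaction receives a quality score in [0, 1] from some evaluator, such as human review or an automated grader; how the score is produced is out of scope. `DriftMonitor` is a hypothetical helper. It compares a rolling mean against a baseline instead of looking for individual errors, because there are no individual errors to find.

```python
from collections import deque
from statistics import mean

class DriftMonitor:
    """Flags gradual degradation in a per-interaction quality score."""

    def __init__(self, baseline: float, tolerance: float, window: int):
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores: deque[float] = deque(maxlen=window)

    def observe(self, score: float) -> bool:
        """Record a score; return True when the rolling mean falls below baseline - tolerance."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data for a full window yet
        return mean(self.scores) < self.baseline - self.tolerance

# Scores slip by 0.0005 per interaction: far too little to notice case by case.
monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=100)
first_alert = next(i for i in range(1_000) if monitor.observe(0.90 - 0.0005 * i))
print(first_alert)  # 150
```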

Most organizations have a window of roughly twelve to twenty-four months from initial deployment before proliferation makes course correction genuinely expensive. That window closes faster than most leadership teams expect.

Four Decisions Worth Making Early

Getting ahead of this does not require a platform or a reorganization. It requires treating four questions as design commitments rather than things to resolve later:

- Decision precedence. When two systems reach contradictory conclusions about the same customer, which one wins? This needs a clear answer before either system is live - not an informal escalation path that develops organically in production.

- Shared state across journeys. A customer journey crossing three functions should not restart context at each handoff. The reasoning at step three needs what happened at steps one and two. Three inference points restarting from scratch is not a journey - it is three disconnected transactions sharing a customer ID.

- Prompt governance as policy governance. Any prompt encoding a business rule is a policy artifact. It needs versioning, cross-team consistency review, and regression testing when models change. Not as overhead - as basic operational hygiene for systems making consequential decisions at scale.

- Decommissioning paths. Inference points are trivial to add and surprisingly hard to remove without a deliberate process. Legacy logic left running after its purpose has expired does not sit idle - it consumes tokens, adds latency, and introduces decision conflicts that nobody remembers designing.
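Decision precedence in particular can be written down as data and enforced in code. The following minimal sketch assumes each system proposes an outcome per decision type. The precedence table is hypothetical: whether margin should outrank retention for goodwill credits is a business decision, not an engineering one. Any conflict without an agreed rule goes to a human instead of being settled by whichever system answered last.

```python
# Hypothetical precedence table agreed before go-live: for each decision type,
# systems earlier in the list win conflicts against systems later in it.
PRECEDENCE: dict[str, list[str]] = {
    "goodwill_credit": ["pricing", "support"],
    "escalation": ["ops", "support"],
}

def resolve(decision_type: str, proposals: dict[str, str]) -> tuple[str, str]:
    """Return (deciding_system, outcome) for one customer and decision type."""
    if not proposals:
        raise ValueError("no proposals to resolve")
    if len(set(proposals.values())) == 1:
        return next(iter(proposals.items()))  # all systems agree: no conflict
    for system in PRECEDENCE.get(decision_type, []):
        if system in proposals:
            return system, proposals[system]
    return "human_review", "escalate"  # conflict with no agreed precedence

print(resolve("goodwill_credit", {"support": "offer", "pricing": "deny"}))
print(resolve("refund", {"support": "approve", "billing": "reject"}))
print(resolve("goodwill_credit", {"support": "offer"}))
```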

What Comes Next

The three failures above are all symptoms of the same underlying condition: AI architectures organized around org chart boundaries rather than around the journeys customers actually take.

Part 2 makes the case that the customer journey is the right organizing principle for agentic orchestration - not because it is a cleaner abstraction, but because it is where the cost of semantic fragmentation becomes most visible and most measurable. From there, the path from fragmented inference points toward a coherent orchestration layer stops being theoretical and starts having concrete design decisions attached to it.

Part 1 of a three-part series.